The Future Is Here, Just Not Equally Distributed

William Gibson wrote that the future is already here — it is just not evenly distributed. He was describing the uneven geography of technology adoption, but he could not have anticipated how precisely the line would map onto artificial intelligence in 2026, or how steep the gradient would become.

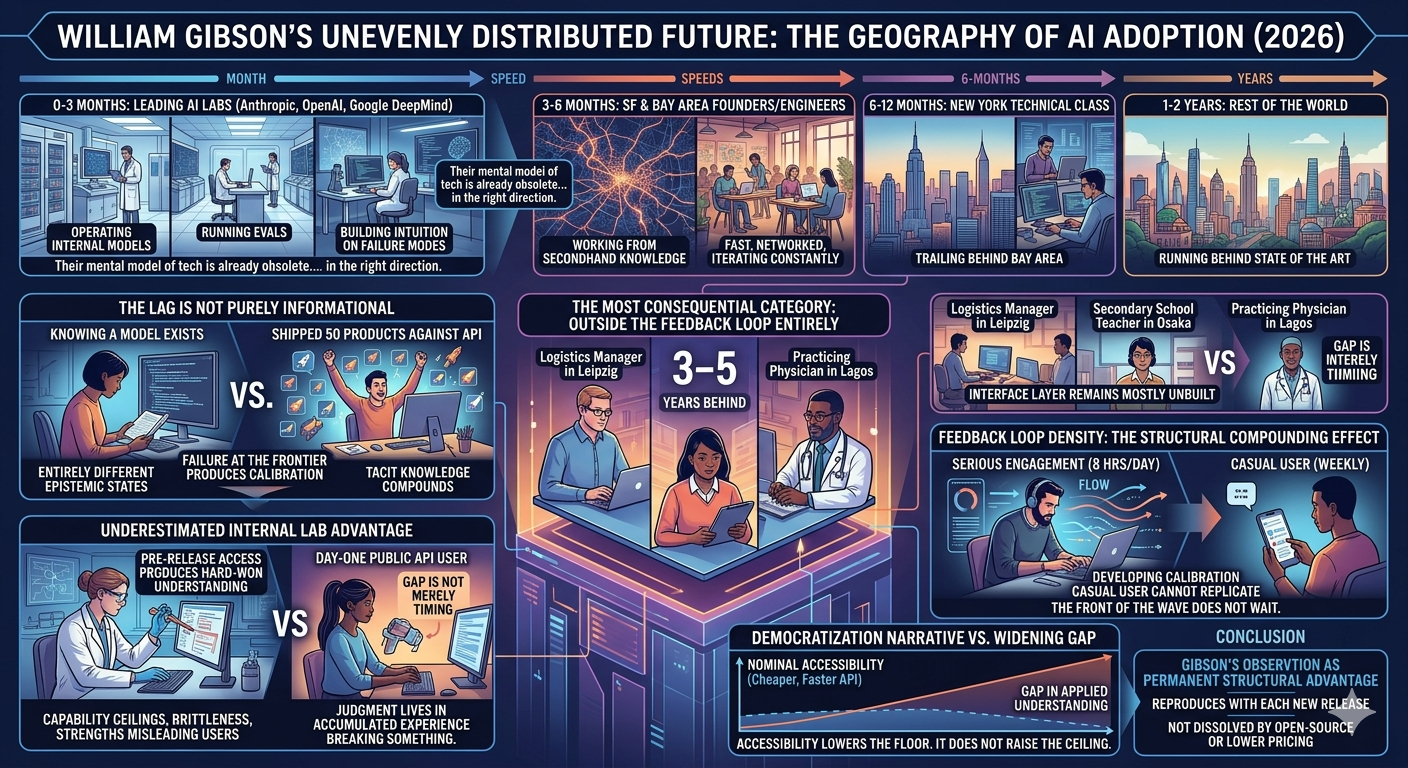

The distribution follows a rough but observable hierarchy. Researchers and engineers at major AI laboratories — Anthropic, OpenAI, Google DeepMind — are operating with internal models that will not reach the public for another three to four months. They are not reading about capability jumps; they are running evals against them, building intuition about failure modes, and quietly recalibrating their assumptions about what machines can and cannot do. By the time the API drops, their mental model of the technology is already obsolete in the right direction.

Below them sit the founders and engineers concentrated in San Francisco and the surrounding Bay Area, who follow within three to six months. They are fast, networked, and iterating constantly, but they are already working from secondhand knowledge of what the labs know. New York’s technical class trails by another six to twelve months. Beyond that — the rest of the world, which for the most part is running one to two years behind the state of the art, if it is meaningfully engaged with the technology at all.

The lag is not purely informational. That distinction matters. Knowing that a more capable model exists and having shipped fifty products against its API are entirely different epistemic states. The founders ahead of the curve are not simply better-read; they have already failed repeatedly with tools most people have not yet touched. Failure at the frontier produces calibration that no blog post, conference talk, or product demo can replicate. It is tacit knowledge, and it compounds.

The internal lab advantage is also likely underestimated from the outside. The gap between a researcher stress-testing a pre-release model and a startup founder using the day-one public API is not merely a matter of timing. Pre-release access produces hard-won understanding of capability ceilings, brittleness under adversarial prompting, and the specific ways in which a model’s strengths mislead users into overextending it. That understanding does not transfer cleanly through technical papers or release notes. It lives in the accumulated judgment of people who spent months breaking something before anyone else could use it.

The most consequential category, however, is not the gradient between labs and San Francisco. It is the enormous and largely static population that sits outside the feedback loop entirely. A logistics manager in Leipzig, a secondary school teacher in Osaka, a practicing physician in Lagos — they are not one or two years behind the state of the art. In terms of practical deployment affecting their daily work, they may be three to five years behind tools that already exist and could materially change what they do. The interface layer between frontier capability and functional workflow integration remains mostly unbuilt.

What makes the distribution persistently uneven is not geography or access in the simple sense. It is feedback loop density. Someone engaging seriously with AI systems for eight hours a day develops calibration that a casual weekly user cannot replicate regardless of nominal access to the same tools. The compounding effect is structural. The front of the wave does not wait.

The uncomfortable implication is that the gradient is not flattening at the rate the democratization narrative suggests. As models become more nominally accessible — cheaper, faster, available through consumer interfaces — the gap in applied understanding between the frontier and the median user may in fact be widening. Accessibility lowers the floor. It does not raise the ceiling, and it does not close the distance between someone who has been living inside a technology and someone who just learned it exists.

Gibson’s line was meant as an observation about latency, not hierarchy. But in AI’s current moment, it functions more accurately as a description of permanent structural advantage — one that reproduces itself with each new capability release, and that no amount of open-source tooling or lowered API pricing is likely to dissolve on its own.